Abstract

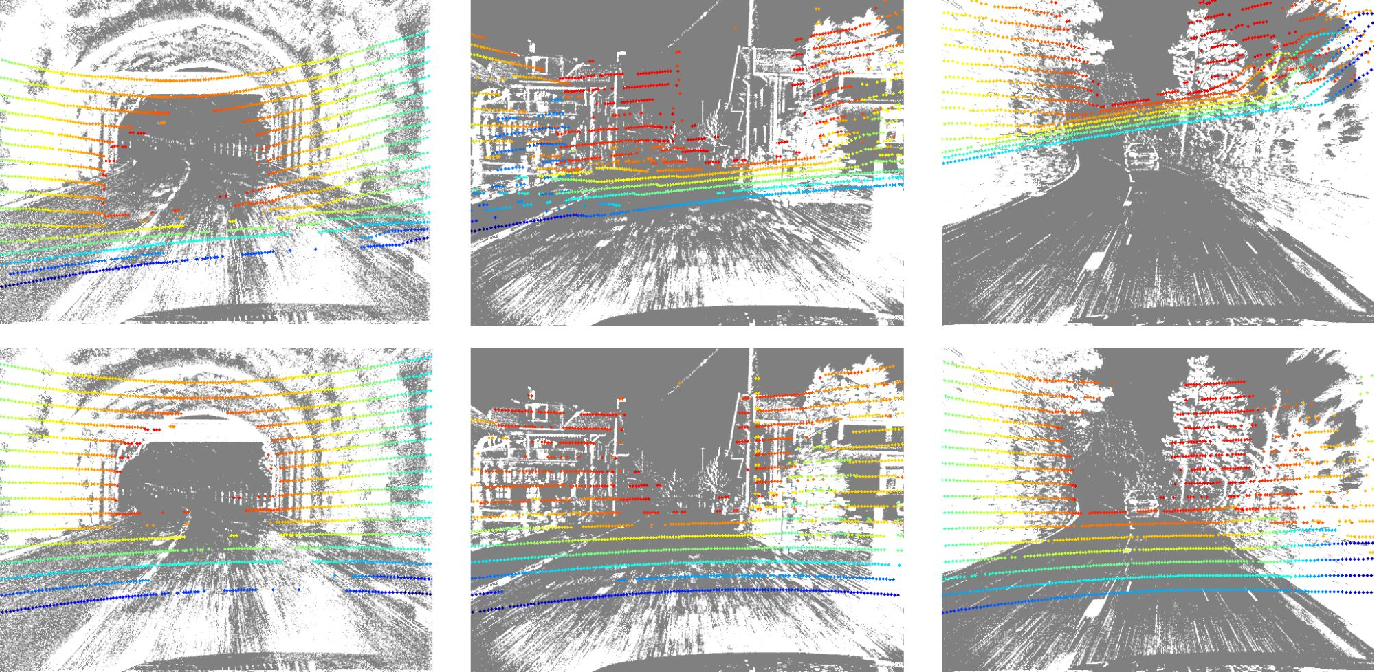

Despite the increasing interest in enhancing perception systems for autonomous vehicles, the online calibration between event cameras and LiDAR — two sensors pivotal in capturing comprehensive environmental information — remains unexplored. We introduce MULi-Ev, the first online deep learning-based framework tailored for the extrinsic calibration of event cameras with LiDAR. This advancement is instrumental for the seamless integration of LiDAR and event cameras, enabling dynamic real-time calibration adjustments that are essential for maintaining optimal sensor alignment amidst varying operational conditions. Rigorously evaluated against the real-world scenarios presented in the DSEC dataset, MULi-Ev not only achieves substantial improvements in calibration accuracy but also sets a new standard for integrating LiDAR with event cameras in mobile platforms. Our findings reveal the potential of MULi-Ev to bolster the safety, reliability, and overall performance of event-based perception systems in autonomous driving, marking a significant step forward in their real-world deployment and effectiveness.

Citation

@InProceedings{Cocheteux_2024_CVPR,
    author    = {Cocheteux, Mathieu and Moreau, Julien and Davoine, Franck},
    title     = {MULi-Ev: Maintaining Unperturbed LiDAR-Event Calibration},
    booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops},
    month     = {June},
    year      = {2024},
    pages     = {4579-4586}
}